What’s the difference between a customer service agent who greets people with “I’m happy to help you” and an AI chatbot that says the same thing? This might sound like a trick question, but it’s not: the difference is that an AI chatbot can’t actually be happy. But isn’t it nice to hear anyway? New research shows otherwise, determining that AI-expressed positive emotion can even produce a negative effect on customer experience. Maybe the bots aren’t taking over after all.

Logically everyone knows that software doesn’t have feelings, but AI chatbots that express emotion—as well as other advanced artificial intelligence tools like Google AI’s chatbot and ChatGPT—have a sentient quality that places them somewhere between machine and human. Conventional customer service wisdom shows that when human employees express positive emotion, customers give higher evaluations of the service. But when emotionally expressive chatbots enter the equation, people’s reactions change depending on their expectations. Whether someone is looking for information on a product, needs to make a simple transaction, or wants to lodge a complaint can make all the difference to a positive or negative experience with a cheerful chatbot.

New research by Desautels Faculty of Management professor Elizabeth Han investigates the effects of AI-powered chatbots that express positive emotion in customer service interactions. In theory, making software appear more human and emotionally upbeat sounds like a great idea, but in practice, as Professor Han’s research shows, most people aren’t quite ready to make a cognitive leap across the uncanny valley.

Delve: Artificial intelligence tools that interact with people have become extremely commonplace in our daily lives in the past few years, with hundreds of companies deploying AI-powered chatbots on their websites as a first line of user or customer communication. What did you observe about these AI tools and their use that compelled you to embark on the research discussed in your paper “Bots with Feelings: Should AI Agents Express Positive Emotion in Customer Service?”

Elizabeth Han: Artificial intelligence technology is pretty old; it came about decades ago, but I think the reason why it has been talked about so much recently is that, first, it became more like an interactional partner with people. And second, it literally permeated our daily lives. Because of those two reasons, people are now starting to perceive it as a new entity, not a person but something new, an entity you can react to, respond to, talk with, interact with. That is an interesting perspective for me because AI is increasingly doing the job of interacting with users. What happens when we bring something that is very unique to humans into this interaction between AI and humans? Emotion is extremely unique to humans. So what happens if we imbue those emotional capabilities in AI-driven tools? How will people respond to those emotional capabilities?

Delve: What are the current benefits of imbuing these interactive AI tools with emotions, whether they’re primarily text-based, voice-based or otherwise public-facing? What is their purpose from an organization’s point of view?

Elizabeth Han: The primary purpose of imbuing those emotions is essentially to mimic actual human-to-human interactions. This emotional AI will be especially useful in areas where the interaction aspect is extremely important, for instance, mental health. There are a lot of online education platforms, and there are AI coaches, teachers, and so on. In any area in which emotional aspects of the interactions are important, these emotional AIs can be useful. But now the key question is, can it be useful in general contexts? Not only in those specific areas where emotional interaction is important, but in a general sense, like customer service. That’s the question our research addresses.

Delve: In your experiments and in similar real-world chatbot interactions, there are certain contexts where these AI tools have specific or customized uses. But can these AI tools be applied in a broader sense, such as where there are more unknowns about the circumstances and the users, and where people expect responses right away? Thinking about our reactions to chatbots that express emotion, especially our reactions to positive emotions, as your paper focuses on, people’s reactions depend on the situational context but also depend on the person, of course. Different people are simply going to react differently, in the same way that occurs in human interactions. Figuring out why people react differently to chatbots than to humans is a new and fascinating question. What did you find out about that through your research?

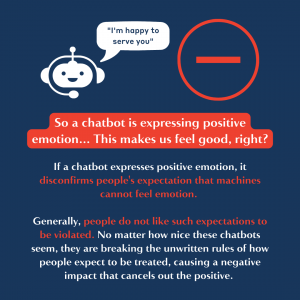

Elizabeth Han: Thanks for pointing out that our research focuses on positive emotional expression. We restrict our context to cases in which the expressed emotion is appropriate, because if it’s not appropriate, it would have huge consequences. That would be a separate research topic. We are only focusing on those appropriate positive emotions, which are pretty common in human-driven service interactions. People just greet with, “I’m happy to serve you,” for example. That’s the expression of positive emotion.

In prior literature on customer service, positive emotional expression by human employees has been generally beneficial. There are many possible reasons why, but one underlying mechanism is emotional contagion. Essentially, if I express positive emotion, you can feel that positive emotion just by observing the expression, because it’s kind of an unconscious process. So that was one mechanism behind the impact of humans expressing positive emotion. We found that it also happens with chatbot-expressed emotion, which is pretty interesting, because it indicates that this unconscious affective process can carry over to the case of chatbot-expressed emotion.

What’s interesting is that there is another competing mechanism, which is negative. So ultimately, it’s going to cancel out that positive impact from emotional contagion. And that mechanism is called “expectation disconfirmation.” It’s basically how a certain event violates people’s expectations. In general, people don’t like their expectations to be violated, right? Because it’s going to cause some cognitive dissonance, and so on.

We found out that if a chatbot expresses positive emotion, it disconfirms people’s expectation. And what kind of expectation? The expectation that machines cannot feel emotion. How can they express emotion when they cannot feel the emotion? There’s this cognitive dissonance coming from that violation of expectation, and that’s actually causing a negative impact on the customers’ evaluation of the service. It’s like those two competing mechanisms cancel each other out.

Delve: Businesses and other organizations are spending billions on AI investment right now. And they are tracking data and doing surveys, as you point out, but how can your academic research and other research in this area make an impact on their decision making around emotional AI?

Elizabeth Han: The reason companies are trying to deploy emotional AIs is that they want it to be beneficial. They want to mimic those human interactions, and they assume that bringing in those emotional AIs can always enhance the customer’s evaluation. But our research actually indicates that it’s not necessarily beneficial. And depending on the customer, it can even backfire. So from the company’s perspective, it’s really important to recognize what type of customer you’re interacting with. That could be possible based on historical conversations with certain customers, or even by analyzing the real-time conversation with the customer. So the core lesson is that the chatbot should be context-aware. It should express emotion only to the right customer, at the right time, in the right context. I think that’s the key message our research is trying to give.

For further insights and information, listen to the full interview with Elizabeth Han on the Delve podcast.

This episode of the Delve podcast is produced by Delve and Robyn Fadden. Original music by Saku Mantere.

Delve is the official thought leadership platform of McGill University’s Desautels Faculty of Management. Subscribe to the Delve podcast on all major podcast platforms, including Apple podcasts and Spotify, and follow Delve on LinkedIn, Facebook, Twitter, Instagram, and YouTube.